
Zhihao Yang

Hello! Welcome to my homepage.

Publications

First Author

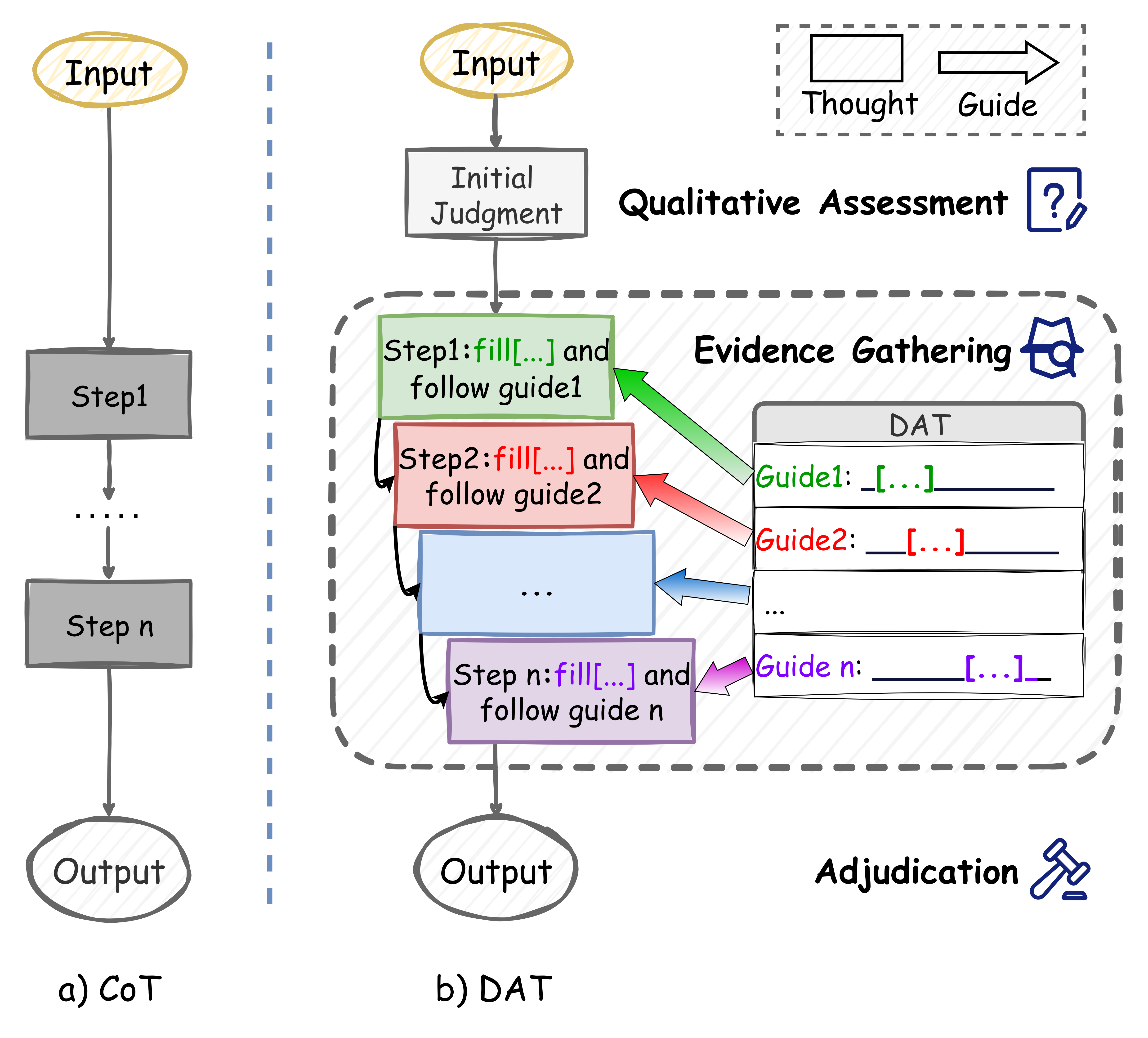

Structuring Reasoning for Complex Rules Beyond Flat Representations

arXiv, 2025 [arXiv] An approach that equips LLMs with a structured template to methodically gather and verify evidence within complex rule systems, drastically improving reasoning fidelity.

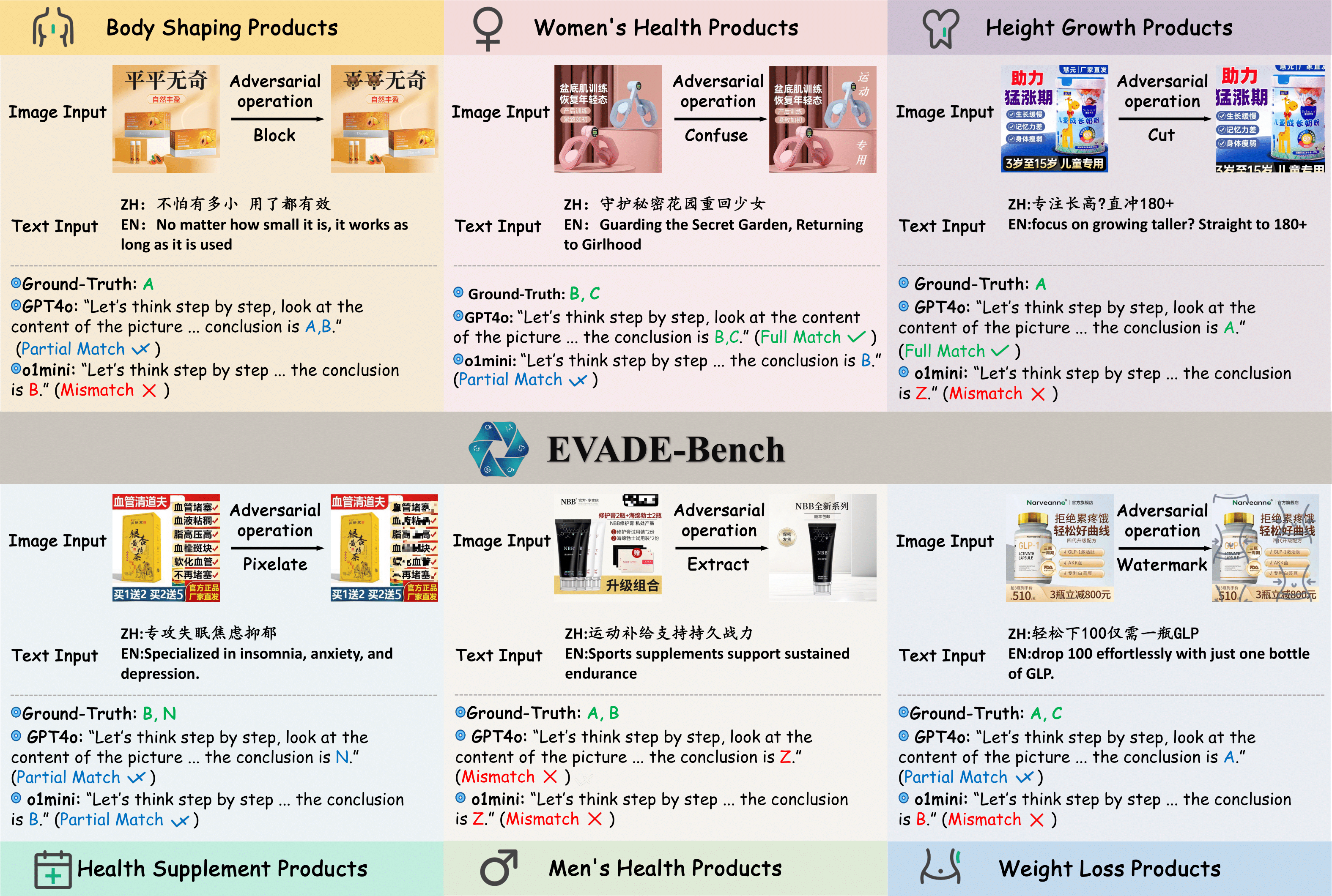

EVADE: Multimodal Benchmark for Evasive Content Detection in E-Commerce Applications

SIGIR 2026 (CCF-A) [project page] [arXiv] EVADE-Bench introduces a multimodal benchmark to evaluate evasive content detection and provides a strong foundation for developing more robust detection systems.

Co-authored Papers

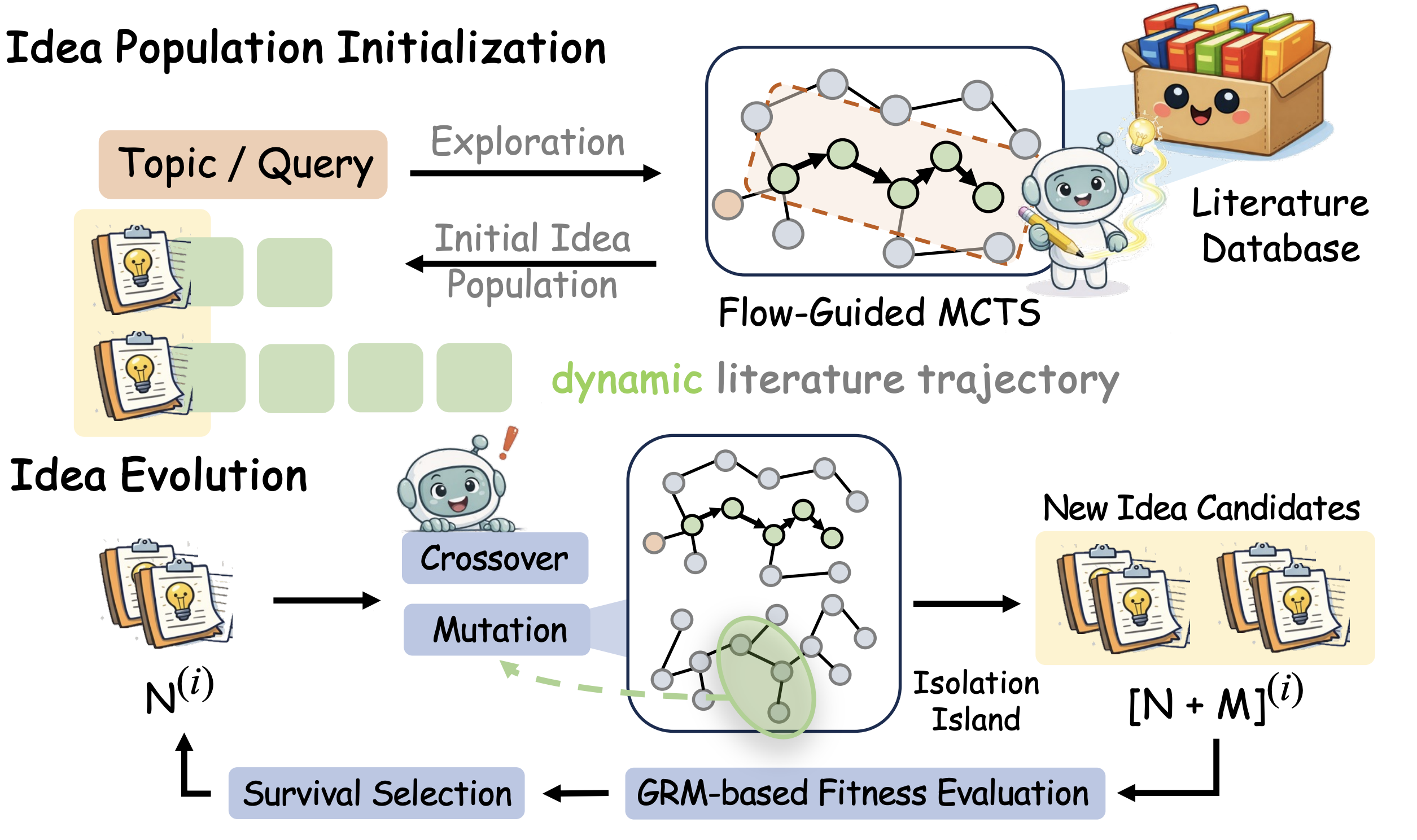

FlowPIE: Test-Time Scientific Idea Evolution with Flow-Guided Literature Exploration

arXiv, 2026 [arXiv] FlowPIE tightly couples literature retrieval with idea generation through flow-guided search and test-time evolution, producing research ideas that are more novel, diverse, and feasible than strong LLM- and agent-based baselines.

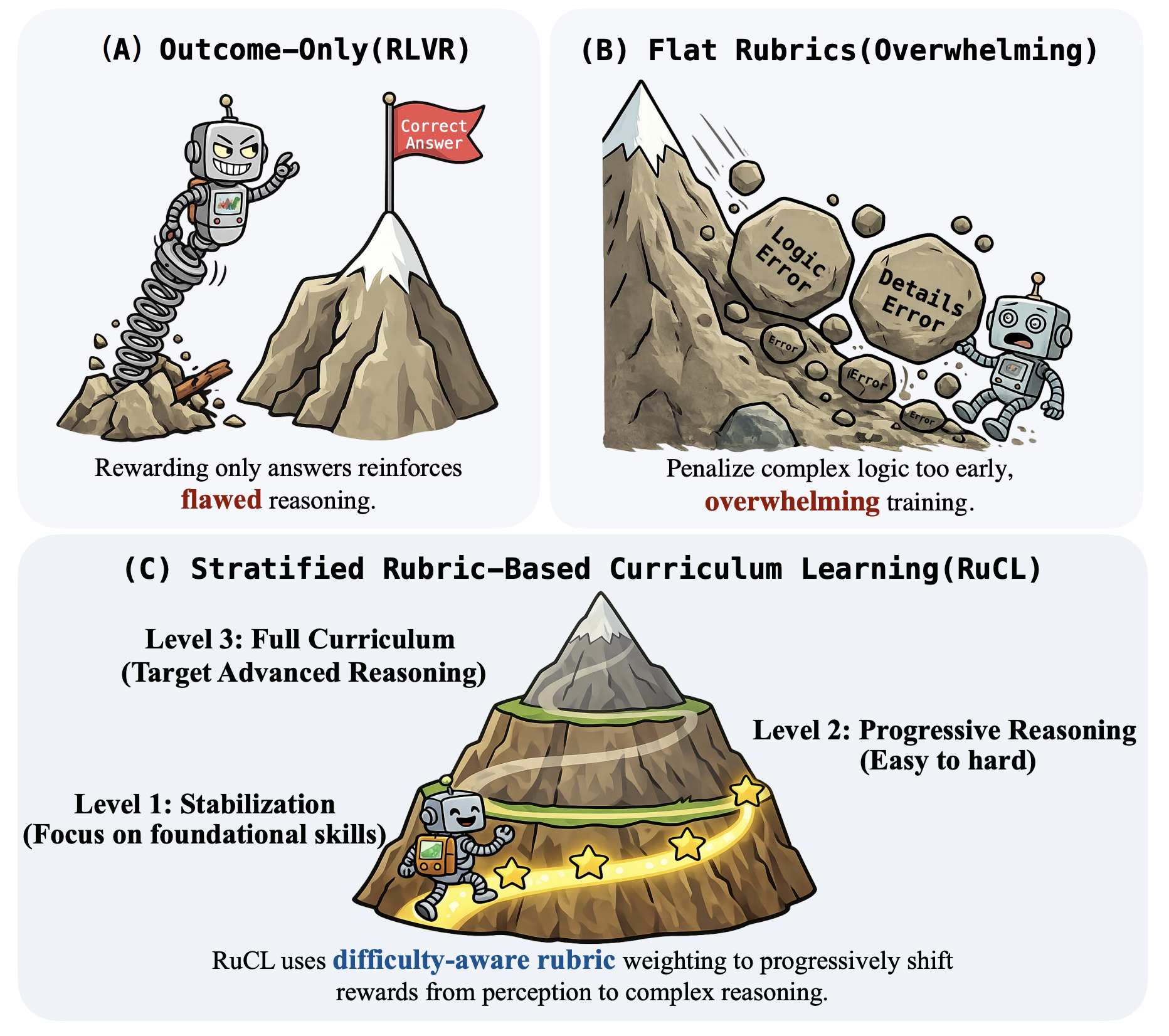

RuCL: Stratified Rubric-Based Curriculum Learning for Multimodal Large Language Model Reasoning

ICML 2026 (CCF-A) [arXiv] RuCL reframes curriculum learning around reward design by stratifying rubrics according to model competence, then dynamically reweighting them to progressively improve multimodal reasoning from perception to higher-order logic.

Working Experience